This data was originally featured in the April 27, 2022 newsletter found here: https://www.trustinsights.ai/blog/2022/04/inbox-insights-april-27-2022-will-ai-take-your-job-brand-awareness-nps-scoring/

In this week’s Data Diaries, we’re going to take a look at last week’s newsletter, where we asked you the following question:

How likely are you to recommend Trust Insights as a consulting firm to a colleague in the next 90 days?

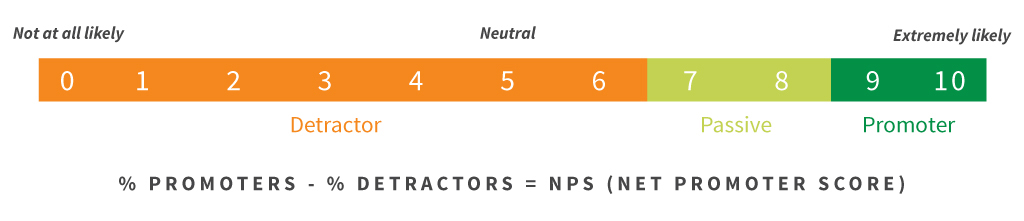

This is a generic clone of what’s known as the Net Promoter Score™, a system devised by Fred Reichheld and the management consulting firm Bain & Company. Responses are usually collected on a scale of 0-10 and grouped into three categories: detractors (0 to 6), passives (7 and 8), and promoters (9 and 10). The scoring breakout is done like this:

Graphic by Satmetrix

A Net Promoter Score is calculated as the percentage of promoters minus the percentage of detractors.
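As a rough illustration of that calculation on the classic 0-10 scale, here is a minimal Python sketch. It assumes the standard category bands (0 to 6 detractors, 7 and 8 passives, 9 and 10 promoters). The function names and the sample ratings are hypothetical, made up for this example rather than taken from any real survey.

```python
def categorize(rating: int) -> str:
    """Map a 0-10 rating to a category, assuming the standard bands."""
    if not 0 <= rating <= 10:
        raise ValueError(f"rating must be between 0 and 10, got {rating}")
    if rating >= 9:
        return "promoter"
    if rating >= 7:
        return "passive"
    return "detractor"


def nps_from_ratings(ratings: list[int]) -> float:
    """Percentage of promoters minus percentage of detractors."""
    if not ratings:
        raise ValueError("need at least one rating")
    categories = [categorize(r) for r in ratings]
    promoters = categories.count("promoter")
    detractors = categories.count("detractor")
    return 100 * (promoters - detractors) / len(ratings)


# Hypothetical ratings, invented purely for illustration.
sample_ratings = [10, 9, 8, 7, 6, 3, 10, 8, 9, 5]
print(nps_from_ratings(sample_ratings))
```

Passives count toward the total number of responses but do not appear in the subtraction, which is why a large pool of passives pulls the score toward zero.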

So why didn’t our poll follow the same format? It mainly comes down to philosophy. Zero to ten – even zero to five – is a lot of choices, especially for a fast poll. It requires a lot of cognitive processing on your part as the reader. How much would you recommend a company? The more choices you have, the more it taxes your brain and the less likely you will be to engage with the poll.

We also live in a world now where people are less willing to make nuanced choices in general. People have become much more absolute in their thinking; shades of grey are largely lost. Things are great or awful, a product is five stars or none, and a person gets a swipe left or right. This degradation of nuance means that a 0-10 poll is likely to yield a large pile of 0s and a large pile of 10s – and not much in between.

So we simplified the poll into three choices – likely, neither, and unlikely. That gives us the ability to preserve the categories of the Net Promoter Score while allowing you, the reader, to make a fast, simple decision.

The trade-off of using this method is that you lose some granularity. There’s a very small window of promoters in the original scoring mechanism – only about 18% of the possible responses (2 of the 11 points on the scale) are promoters, while about 64% (7 of 11) are detractors. In our version, each category is one of three choices, so it’s 33% and 33%, and there’s a difference. Does that difference matter? Not in our use of it.
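To see where those shares come from, here is a small Python sketch that counts how many of the possible answers fall into each category under both formats. It assumes the standard 0-10 bands (0 to 6, 7 and 8, 9 and 10), and the variable names are ours, chosen only for illustration.

```python
# Possible answers per category on the classic 0-10 scale (11 points total),
# assuming the standard bands.
classic_bands = {
    "detractor": range(0, 7),   # 0 through 6
    "passive": range(7, 9),     # 7 and 8
    "promoter": range(9, 11),   # 9 and 10
}

# The simplified poll offers exactly one answer per category.
simplified_bands = {
    "detractor": ["unlikely"],
    "passive": ["neither"],
    "promoter": ["likely"],
}


def share_of_scale(bands: dict) -> dict:
    """Percentage of the possible answers that map to each category."""
    total = sum(len(answers) for answers in bands.values())
    return {name: round(100 * len(answers) / total, 1)
            for name, answers in bands.items()}


classic_share = share_of_scale(classic_bands)
simplified_share = share_of_scale(simplified_bands)
print(classic_share)
print(simplified_share)
```

These are shares of the answer options, not of actual responses. How real respondents distribute themselves across the options is a separate question.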

So how did ours turn out? The good news is that this is a super easy computation. Let’s say you have 100 responses – 10 promoters, 8 detractors, and 82 passives. The math is 10% minus 8%, or a score of 2. The range is -100 to +100; if you had 1 promoter, 1 passive, and 98 detractors, you’d have 1% minus 98%, or a score of -97.
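The two worked examples above can be checked with a few lines of Python. This is a minimal sketch that works from category counts. The function name `net_promoter_score` is our own choice for illustration, not part of any official tooling.

```python
def net_promoter_score(promoters: int, passives: int, detractors: int) -> float:
    """Percentage of promoters minus percentage of detractors, from counts."""
    total = promoters + passives + detractors
    if total == 0:
        raise ValueError("need at least one response")
    return 100 * (promoters - detractors) / total


# The two examples from the text, each with 100 responses.
example_a = net_promoter_score(promoters=10, passives=82, detractors=8)
example_b = net_promoter_score(promoters=1, passives=1, detractors=98)
print(example_a)
print(example_b)

# The extremes of the range: everyone a promoter, or everyone a detractor.
print(net_promoter_score(5, 0, 0))
print(net_promoter_score(0, 0, 5))
```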

What’s a good score? Various folks who have made use of NPS over the years have said +50 or above is excellent. Above zero is okay. Below zero isn’t good, and below -50 is really, really bad.

How did we do? 39.7% promoters, 36.6% passives, and 23.7% detractors, leaving us with a score of 16. We’re above zero, but we’re not real close to 50.
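For readers who want to reproduce that result, here is a short Python sketch that subtracts the reported percentages and applies the rough rules of thumb mentioned earlier. The thresholds are informal guidance rather than an official standard. The sketch assumes the lower cutoff is -50, and the label for a score of exactly zero is our own assumption, since the guidance above does not cover it.

```python
def interpret(score: float) -> str:
    """Attach a rough, informal label to a score between -100 and +100."""
    if not -100 <= score <= 100:
        raise ValueError(f"score must be between -100 and 100, got {score}")
    if score >= 50:
        return "excellent"
    if score > 0:
        return "okay"
    if score == 0:
        return "neutral"  # assumption: the rules of thumb do not address zero
    if score >= -50:
        return "not good"
    return "really bad"


# Percentages reported for the poll in the text.
promoters_pct = 39.7
detractors_pct = 23.7

# Round to one decimal place to avoid floating-point noise in the subtraction.
score = round(promoters_pct - detractors_pct, 1)
label = interpret(score)
print(score, label)
```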

Here’s the big question, a question that neither the original nor our modified version answers well:

So what?

Whether you got a score of -97 or +25 or +89, the score itself is a single data point. It’s a snapshot of a number with no context. From this score alone, we can’t make a decision – it doesn’t tell us what to do differently, if anything. This is also one of the major criticisms of the Net Promoter Score™ methodology. It’s a barometer of sorts, but it doesn’t tell you anything actionable by itself.

There’s some nuance; if your passives are a huge pool and you have very few detractors or promoters, then that tells you people don’t feel very strongly about you one way or another. And obviously, if either promoters or detractors is a disproportionately large number, you know something’s either very right or very wrong, but beyond that, there’s not much else to conclude. Over time, this data could show trends; a score that increases over time suggests you’re providing more value to your audience.

Ultimately, NPS is a lagging indicator of the value you provide. If you choose to use it in your overall marketing analytics mix, just know that it doesn’t appear to have much predictive power or much capability to tell you what to do better. It lacks any granular details or qualitative data to explain whatever score you achieve – that has to be filled in by separate market research efforts.

Going forward, we’ll be using it on a more regular basis in the newsletter, so expect to see this poll every so often throughout the year – a data point isn’t all that helpful, but a data series where we can compare and contrast over time is.
