YOUR PROMPTS ARE WORKING AGAINST YOU

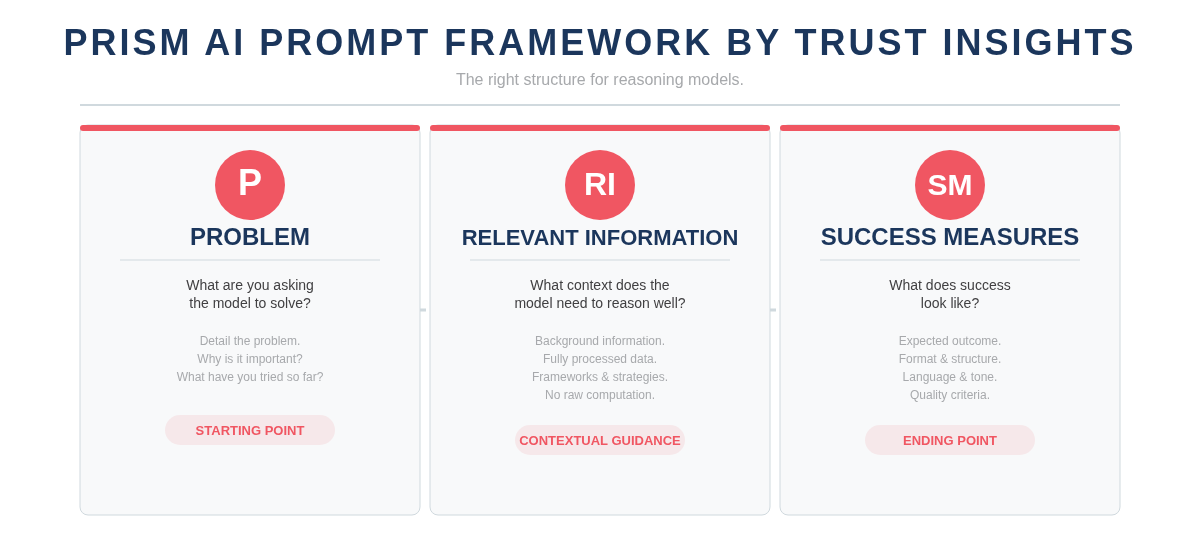

The PRISM AI Prompt Framework by Trust Insights

Reasoning models (DeepSeek, Gemini Flash Thinking, OpenAI o1/o3, Claude with extended thinking) don’t work like standard chatbots. They build their own chain of thought, reflect on their reasoning, and score their own output. The prompting techniques that work for regular models actively interfere with reasoning models: step-by-step instructions tell them what to think instead of letting them think.

PRISM gives you the right structure for reasoning models: define the Problem, provide Relevant Information, and specify your Success Measures. Three components. No step-by-step instructions. You give the model a starting point, an ending point, and contextual guidance — then let it reason its way from A to B.
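As a rough illustration, the three components can be assembled into a single prompt string. The sketch below is one possible layout, not part of the PRISM guide itself: the `PrismPrompt` class, its field names, and the section labels are hypothetical, and the sample text is invented for the example.

```python
from dataclasses import dataclass


@dataclass
class PrismPrompt:
    """Hypothetical container for the three PRISM components."""

    problem: str
    relevant_information: str
    success_measures: str

    def render(self) -> str:
        """Join the three components into one prompt, in PRISM order."""
        sections = [
            ("PROBLEM", self.problem),
            ("RELEVANT INFORMATION", self.relevant_information),
            ("SUCCESS MEASURES", self.success_measures),
        ]
        for title, body in sections:
            # An empty component leaves the model without a start, context, or end point.
            if not body.strip():
                raise ValueError(f"{title} must not be empty")
        # Deliberately no step-by-step instructions: only the three components.
        return "\n\n".join(f"{title}\n{body.strip()}" for title, body in sections)


# Illustrative content only; replace with your own situation.
prompt = PrismPrompt(
    problem=(
        "Newsletter sign-ups dropped after our site redesign. We moved the form "
        "back to the header and saw no recovery. We need to find the likely cause."
    ),
    relevant_information=(
        "The redesign launched in March. The form moved from a pop-up to the footer, "
        "then to the header. Traffic volume is unchanged."
    ),
    success_measures=(
        "A one-page memo in plain language with three ranked hypotheses, "
        "each with a test we can run in a week."
    ),
).render()

print(prompt)
```

Keeping the components as separate fields makes it easy to spot when one of them is thin before the prompt is sent.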

P — PROBLEM

Detail what you’re asking the model to solve. What is it? Why is it important? What have you tried so far? This is where most people go wrong with reasoning models. They give vague instructions (“write me a marketing plan”) and wonder why the output is generic. A reasoning model needs to understand the problem deeply enough to reason about it — not just execute a template.

The Problem section is your starting point. The richer the problem definition, the better the model’s internal reasoning chain. Include what you’ve already tried and why it didn’t work. Include the constraints. Include what makes this problem hard. You’re not giving instructions — you’re giving the model something to think about. The difference is everything.

Ask yourself: Could someone who knows nothing about your situation read your problem statement and understand exactly what you need solved and why?

RI — RELEVANT INFORMATION

Provide context about the problem space: background information, fully processed data, frameworks, strategies, and anything the model needs to reason well. This is the raw material the model works with. Without it, the model reasons from its training data alone — which may be outdated, incomplete, or irrelevant to your specific situation.

There’s one critical rule: avoid asking reasoning models to do computation or math in the Relevant Information section. Give them fully processed data, not raw numbers to crunch. Reasoning models are optimized for thinking, not calculating. If you need data analysis, do that first with a standard model or a code interpreter, then feed the results into PRISM as context. The more relevant, pre-processed information you provide, the less the model has to guess — and the better its reasoning output.
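One way to follow this rule is to do the arithmetic in ordinary code first and pass only the finished figures to the model. The sketch below uses Python’s standard `statistics` module; the `summarize_series` helper and the sign-up numbers are made up for illustration.

```python
from statistics import mean


def summarize_series(label: str, values: list[float]) -> str:
    """Turn raw numbers into a finished sentence for the Relevant Information section."""
    if len(values) < 2:
        raise ValueError("need at least two data points")
    if values[0] == 0:
        raise ValueError("first value must be non-zero to compute percent change")
    average = mean(values)
    # Percent change from the first period to the last.
    change_pct = (values[-1] - values[0]) / values[0] * 100
    return (
        f"{label}: {len(values)} periods, average {average:.1f}, "
        f"change from first to last period {change_pct:+.1f}%."
    )


# Illustrative data only (hypothetical monthly sign-ups).
monthly_signups = [100, 120, 150, 190]
relevant_information = summarize_series("Monthly newsletter sign-ups", monthly_signups)

print(relevant_information)
```

The model then receives a finished statement about the trend rather than a column of numbers it would have to crunch itself.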

Ask yourself: Are you giving the model enough context to reason well, or are you forcing it to fill in the blanks with assumptions?

SM — SUCCESS MEASURES

Define what success looks like. What’s the outcome you expect? What format, structure, language, and tone should the output have? This is your ending point. Reasoning models use success measures as their internal scorecard: they evaluate their own output against your criteria during the reflection step. The clearer your success measures, the more precisely the model can check its draft against them.

Be specific. “Write something good” gives the model nothing to optimize toward. “Produce a 500-word executive summary in a direct, data-driven tone with three actionable recommendations, each supported by a specific metric” gives it a concrete destination. Success measures encompass everything: formatting, structure, language, tone, length, audience, and quality criteria. This is where reasoning models earn their keep — they’ll iterate internally until the output matches what you described.

Ask yourself: If you handed the model’s output to a colleague with no context, could they tell whether it met your criteria based on your success measures alone?

PRISM vs RAPPEL

Different models need different prompting structures.

| | PRISM | RAPPEL |
|---|---|---|
| Best for | Reasoning models (DeepSeek, Gemini Flash Thinking, OpenAI o1/o3, Claude extended thinking) | Non-reasoning models (ChatGPT, Claude, Gemini standard, Llama, Mistral) |
| Approach | Give a starting point, an ending point, and context. Let the model reason its own path. | Spell out step-by-step instructions. The model follows your directions precisely. |
| Structure | 3 components: Problem, Relevant Information, Success Measures | 6 components: Role, Action, Prime, Prompt, Evaluate, Loop |
| Key difference | You define what to think about. The model decides how to think about it. | You define both what to do and how to do it. The model executes your instructions. |

Using a non-reasoning model? See the RAPPEL Framework.

GO DEEPER

Download the complete PRISM prompting guide. Use it as a reference every time you work with a reasoning model.

The complete guide to prompting reasoning models. All three components explained with examples and a ready-to-use prompt template.