This data was originally featured in the September 14th, 2022 newsletter found here: https://www.trustinsights.ai/blog/2022/09/inbox-insights-process-isnt-the-enemy-google-helpful-content-update/

In this week’s Data Diaries, let’s talk about Google’s Helpful Content Update. According to Google, the update finished rolling out on September 9, after a multi-week process that began August 25. The question is: is anyone seeing an impact from it?

We decided to take a look across our client base to see what kinds of impacts might be visible. Can the Helpful Content Update be seen?

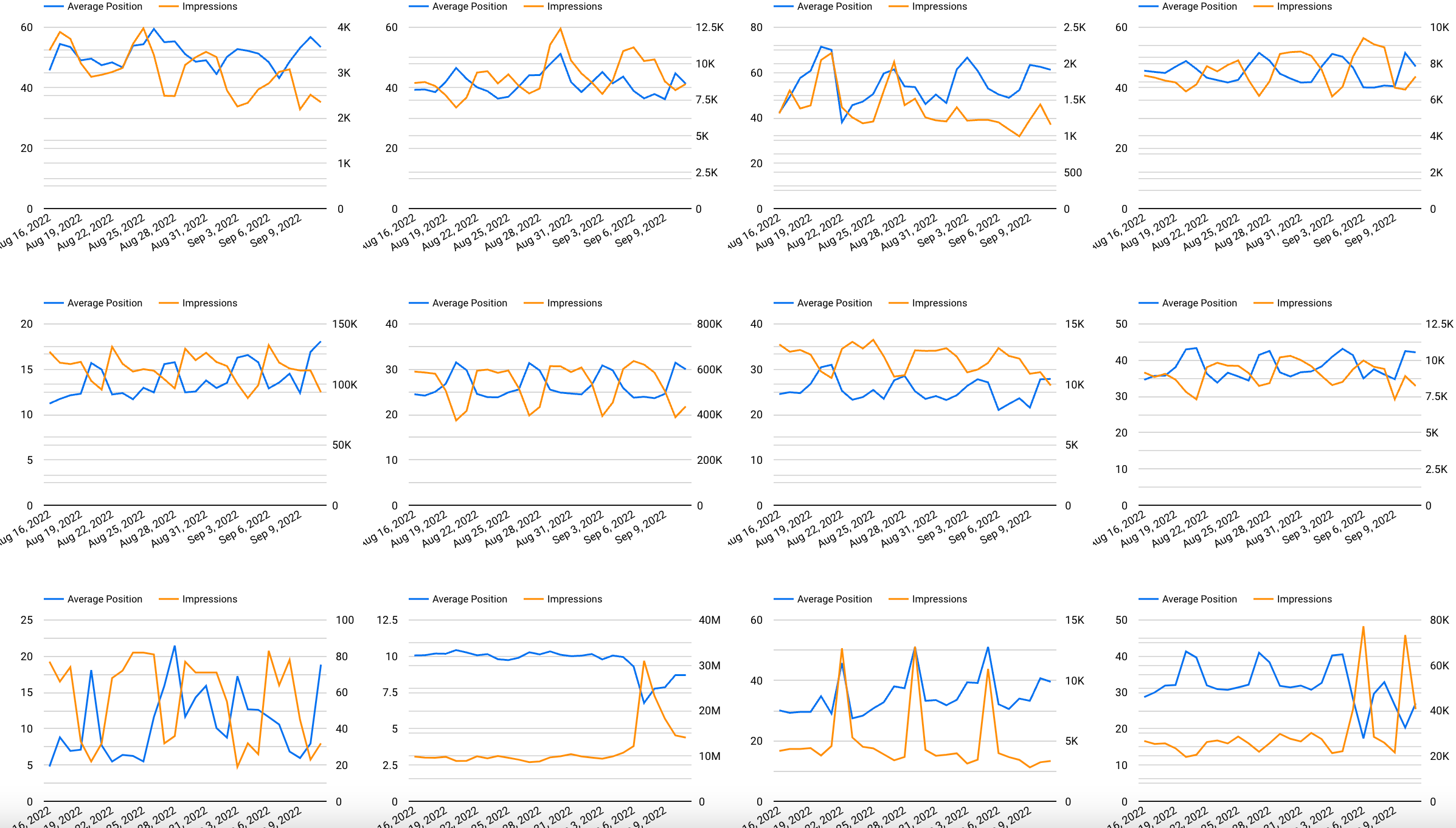

Here’s a sample of a dozen of the sites we monitor for clients. The blue line is average position and the orange line is search impressions. A site that did well in the update should show the blue line going down (closer to position 1 in search results) and the orange line going up (more search impressions); a site that did poorly would show the reverse. What do we see?
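
The eyeball rule above can be written down as a small check. This is a minimal sketch, assuming each site’s daily values have already been split into before-update and after-update lists; the function name `classify_site` and the use of simple averages are our own illustration, and a comparison like this is only a rough first pass, not a statistical test.

```python
from statistics import mean

def classify_site(positions_before, positions_after, impressions_before, impressions_after):
    """Label a site's apparent outcome from simple before/after averages.

    Lower average position means better ranking (closer to position 1),
    so a site that 'did well' shows position falling and impressions rising.
    """
    position_change = mean(positions_after) - mean(positions_before)
    impression_change = mean(impressions_after) - mean(impressions_before)
    if position_change < 0 and impression_change > 0:
        return "did well"
    if position_change > 0 and impression_change < 0:
        return "did poorly"
    return "no clear change"

# Hypothetical example values, not client data.
print(classify_site([12.0, 11.5], [9.0, 8.5], [1000, 1100], [1400, 1500]))  # did well
```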

Across the update period, a few sites (two in the final row) seem to have done well, and little else stands out. What we infer from this snapshot of sites, which span different industries and very different sizes, is that for the most part, no one took a big hit.

Who did? Sites with extensive automated content that added no value, like scraped directories and automatically generated content rewrites.

However, eyeballing charts is rarely reliable; our data often contains trends and patterns that escape even the most watchful eye. To determine what impact, if any, the update had on our search data, we should run a statistical test.

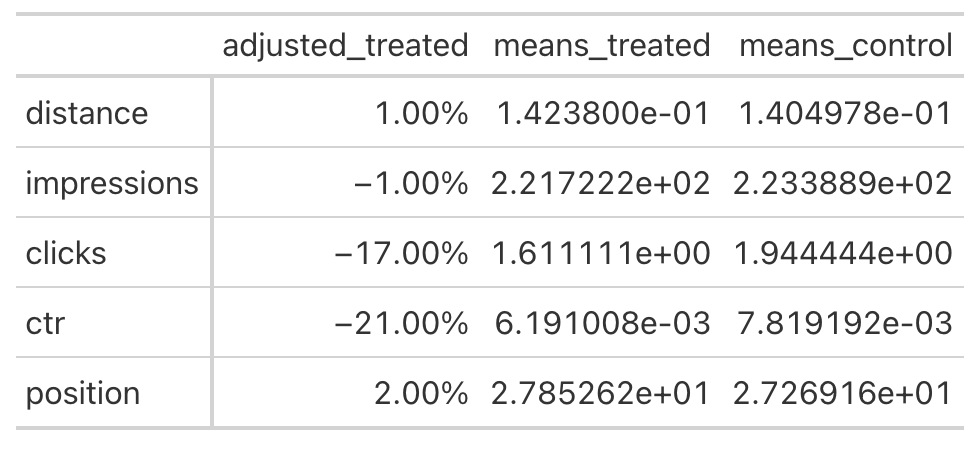

In this case, the test we’ll run is called propensity score matching; it’s effectively a retrospective A/B test. We know from Google’s announcements that the Helpful Content Update began rolling out on August 25 and completed its rollout on September 9. We don’t know exactly when any given site’s rankings were adjusted, but we do know it wouldn’t have been before August 25.

Thus, if we designate all Search Console data for a given site from August 25 onward as our “treatment” period, and every earlier date as our control period, we can compare a year’s worth of data, treatment against control, to see whether there really has been any impact.
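
The labeling step can be sketched in a few lines of Python. This assumes each daily Search Console row has already been exported as a dict with a `date` key; the function names and the choice to count August 25 itself as treatment are our assumptions, not details from the original analysis.

```python
from datetime import date, timedelta

# Google's announced start of the Helpful Content Update rollout.
ROLLOUT_START = date(2022, 8, 25)

def last_year(rows, end):
    """Keep the 365 days of rows ending on `end` (inclusive)."""
    start = end - timedelta(days=364)
    return [row for row in rows if start <= row["date"] <= end]

def label_periods(rows):
    """Add a 'treated' flag: 1 on or after the rollout start, 0 before it."""
    return [{**row, "treated": int(row["date"] >= ROLLOUT_START)} for row in rows]

# Hypothetical rows for illustration.
rows = [{"date": date(2022, 8, 24), "clicks": 10}, {"date": date(2022, 8, 25), "clicks": 12}]
print([r["treated"] for r in label_periods(rows)])  # [0, 1]
```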

What do we find when we run this test for the Trust Insights website?

Search impressions haven’t changed meaningfully, which means we don’t show up in search substantially less than before the update. That’s a good thing. We do see a small increase in average position (a higher position number means lower rankings), along with negative effects on clicks and clickthrough rate; those, however, are a function of how relevant our audience thinks we are as the result to click on for any given query.
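
The write-up doesn’t show how the test was implemented, so the following is only a minimal sketch of propensity score matching in plain Python, under several assumptions: the propensity model is a simple logistic regression fit by gradient descent, matching is 1-nearest-neighbor with replacement on the score, and the covariates (for example, day of week) and function names are hypothetical. Real analyses usually rely on a dedicated package, such as R’s MatchIt, and add balance checks and standard errors that this sketch omits.

```python
import math
from statistics import mean, pstdev

def _sigmoid(x):
    # Numerically stable logistic function.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def propensity_scores(X, treated, lr=0.1, epochs=2000):
    """Estimate P(treated | covariates) with a plain logistic regression.

    X is a list of covariate rows (lists of numbers); treated is a list of 0/1 flags.
    """
    n, k = len(X), len(X[0])
    # Standardize each covariate so a single learning rate suits every column.
    mus = [mean(row[j] for row in X) for j in range(k)]
    sds = [pstdev([row[j] for row in X]) or 1.0 for j in range(k)]
    Z = [[(row[j] - mus[j]) / sds[j] for j in range(k)] for row in X]
    w, b = [0.0] * k, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * k, 0.0
        for z, t in zip(Z, treated):
            err = _sigmoid(b + sum(wj * zj for wj, zj in zip(w, z))) - t
            grad_b += err
            for j in range(k):
                grad_w[j] += err * z[j]
        # Batch gradient descent step on the mean log-loss.
        b -= lr * grad_b / n
        w = [wj - lr * gj / n for wj, gj in zip(w, grad_w)]
    return [_sigmoid(b + sum(wj * zj for wj, zj in zip(w, z))) for z in Z]

def att_by_matching(scores, treated, outcome):
    """Average effect on the treated: pair each treated day with the control
    day whose propensity score is closest (1-nearest-neighbor, with replacement)."""
    controls = [(s, y) for s, t, y in zip(scores, treated, outcome) if t == 0]
    diffs = []
    for s, t, y in zip(scores, treated, outcome):
        if t == 1:
            _, matched_y = min(controls, key=lambda c: abs(c[0] - s))
            diffs.append(y - matched_y)
    return mean(diffs)
```

The estimate would be run once per metric (impressions, clicks, clickthrough rate, average position). For average position, a positive estimate means the number went up, which is a worse ranking; that is why a “small increase in position” reads as lower rankings.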

What should we take away from this analysis? First, it’s good to have a bird’s eye view of the data to see what’s happening across industries. Any agency doing search analytics or search marketing should be keeping an eye on their client base to get a sense of the market overall.

For individual site owners and managers, we should be doing the same, plus running statistical tests to determine whether there’s truly been an impact. We want to know with confidence whether our sites have been substantially affected.

Get unique data, analysis, and perspectives on analytics, insights, machine learning, marketing, and AI in the weekly Trust Insights newsletter, INBOX INSIGHTS. Subscribe now for free; new issues every Wednesday!

Want to learn more about data, analytics, and insights? Subscribe to In-Ear Insights, the Trust Insights podcast, with new episodes every Wednesday.