This data was originally featured in the March 1, 2023 newsletter found here: https://www.trustinsights.ai/blog/2023/03/inbox-insights-march-1-2023-good-and-bad-advice-conventional-metrics/

In this week’s Data Diaries, let’s talk about analytics assumptions. I was asked recently about a specific web analytics metric, bounce rate, and what could be done to reduce bounce rate.

On the surface, this seems like a straightforward, logical question, right? Bounce rate – defined as a site visit when a visitor comes in and leaves without taking further action – is a bad thing, isn’t it, and therefore reducing bounce rate would be a good thing, right?

The question I frequently have about many of the best practices and advice around analytics is how they’ve been tested. There are certainly some websites where a high bounce rate is bad. There are other websites where it’s immaterial. Even within a single website, there are certain types of content where bounce rate is irrelevant, like blog posts, and other types of content where it’s vitally important, like landing pages for paid campaigns.

The reality is we rely on a lot of “common sense” that may not be optimal for our particular situation. So what do we do? What’s the appropriate way to determine whether a piece of advice is right for us?

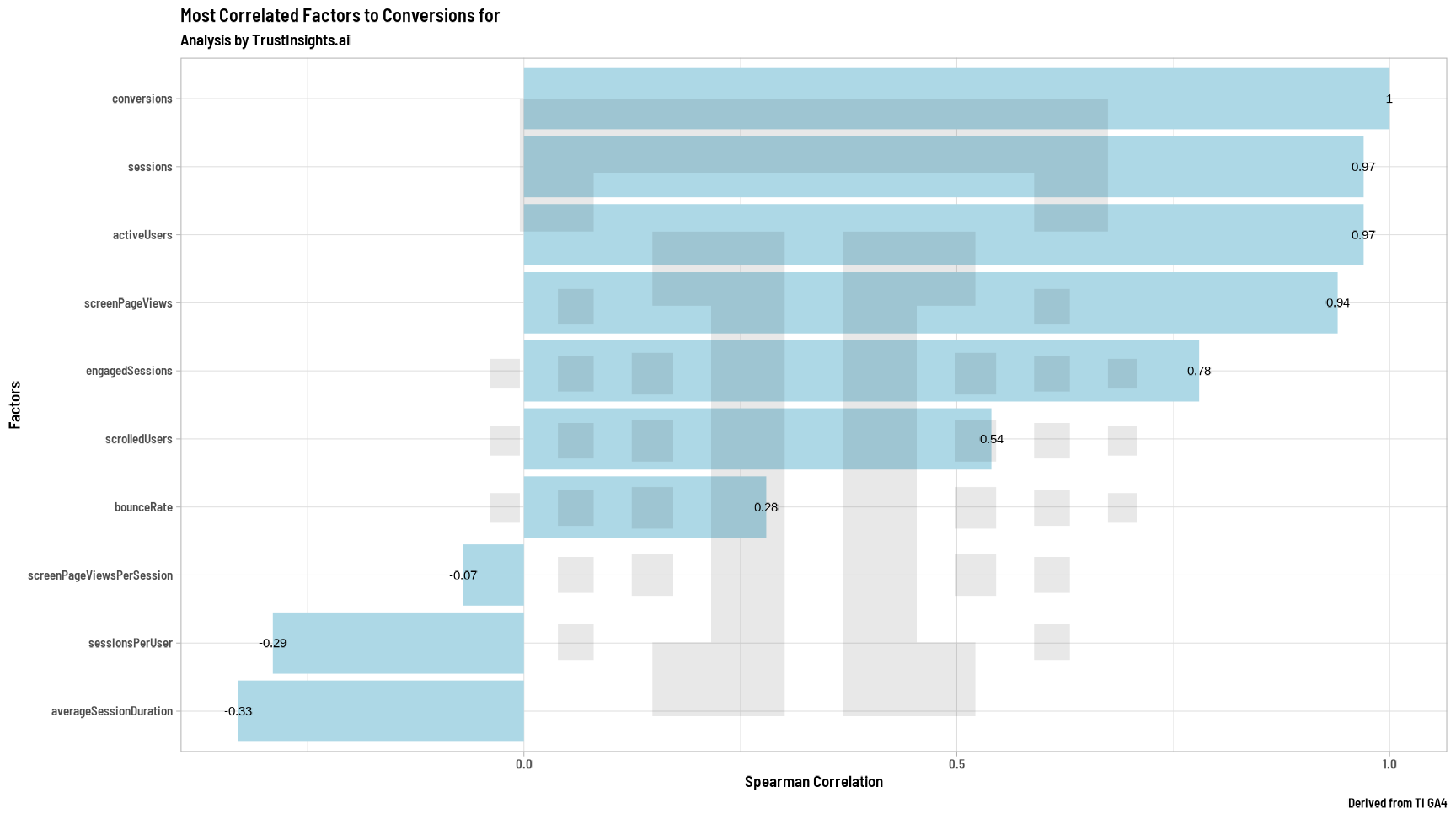

We verify our assumptions with data, of course! Here’s an example. Suppose we believe that bounce rate is important. How would we know whether it was or not – how do we test that belief? We’d start by putting a bunch of metrics into a spreadsheet – sessions, users, page views, etc. We’d add in other measures like average time on page, number of pages per session, etc. And of course, bounce rate. Then we use statistics to test if there’s even a correlation between bounce rate and the outcome we care about – conversions.

Here’s what that looks like:

What we see above is a minor positive correlation between bounce rate and conversions. We see the obvious – more visits, more sessions, and more users correlate almost perfectly with more conversions. We see that scroll depth – how far down a page someone got – matters to conversion. And curiously, that faint bounce rate correlation runs in the positive direction – the more people who bounce off our site, the more conversions we tend to see.
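If you want to run this kind of check on your own data, here is a minimal sketch of the spreadsheet-and-correlation approach described above. It is not the exact analysis behind the results discussed here: the numbers are made up for illustration, the column names (`sessions`, `bounce_rate`, `scroll_depth`) and the helper `correlations_with_outcome` are hypothetical, and it assumes a plain Pearson correlation, which may or may not be the right statistic for your data.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length (at least 2 values)")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when a column never changes")
    return cov / math.sqrt(var_x * var_y)


def correlations_with_outcome(metrics, outcome):
    """Correlate every metric column with the outcome column (hypothetical helper)."""
    return {name: pearson(values, outcome) for name, values in metrics.items()}


# ASSUMPTION: made-up daily numbers for illustration only.
# Replace these with an export from your own analytics tool.
metrics = {
    "sessions": [120, 150, 90, 200, 170, 130, 220, 180],
    "bounce_rate": [0.62, 0.58, 0.65, 0.60, 0.55, 0.63, 0.61, 0.57],
    "scroll_depth": [0.41, 0.47, 0.38, 0.55, 0.52, 0.43, 0.58, 0.50],
}
conversions = [6, 8, 4, 11, 9, 6, 12, 10]

results = correlations_with_outcome(metrics, conversions)

# Print the metrics from strongest to weakest relationship with conversions.
for name, r in sorted(results.items(), key=lambda item: -abs(item[1])):
    print(f"{name:>12}: r = {r:+.2f}")
```

The sign of each coefficient is the part to watch. If conventional wisdom about bounce rate held for your site, you would expect its coefficient to be clearly negative. If your metrics are heavily skewed, a rank-based correlation such as Spearman’s may be a better fit than Pearson.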

To be clear, the positive correlation between bounce rate and conversions is very weak. It barely clears the bar for statistical significance. But if the conventional wisdom were true, we should see a negative correlation – the lower the bounce rate, the higher the conversion – and we don’t see that here.
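One way to judge whether a weak correlation like this is distinguishable from noise is a permutation test: shuffle the conversions column many times and see how often chance alone produces a correlation at least as strong as the one observed. The sketch below is an assumption about how you might do this with only the Python standard library, not the test used for the results above; the function name `permutation_p_value`, its parameters, and the sample numbers are all hypothetical.

```python
import math
import random


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def permutation_p_value(xs, ys, n_permutations=10_000, seed=0):
    """Two-sided permutation p-value for the correlation between xs and ys.

    Shuffling ys breaks any real link with xs, so the shuffled correlations
    show what chance alone produces for data of this size and spread.
    """
    rng = random.Random(seed)  # fixed seed so the result is repeatable
    observed = abs(pearson(xs, ys))
    shuffled = list(ys)
    hits = 0
    for _ in range(n_permutations):
        rng.shuffle(shuffled)
        # Small tolerance guards against floating-point ties.
        if abs(pearson(xs, shuffled)) >= observed - 1e-12:
            hits += 1
    # Add-one correction keeps the estimate from ever being exactly zero.
    return (hits + 1) / (n_permutations + 1)


# ASSUMPTION: made-up numbers for illustration only; use your own export.
bounce_rate = [0.62, 0.58, 0.65, 0.60, 0.55, 0.63, 0.61, 0.57]
conversions = [6, 8, 4, 11, 9, 6, 12, 10]

r = pearson(bounce_rate, conversions)
p = permutation_p_value(bounce_rate, conversions)
print(f"r = {r:+.2f}, permutation p-value = {p:.3f}")
```

A small p-value says the relationship is unlikely to be pure chance. It says nothing about whether the relationship is large enough to matter, which is why both the size and the sign of the correlation deserve a look.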

The key takeaway here is that assumptions about which metrics are good or bad must be tested with your specific data to understand how they affect you. What works for one company may not work for another, so the metrics one company makes its decisions by will not necessarily be the right ones for yours.


