This data was originally featured in the September 6, 2023 newsletter found here: https://www.trustinsights.ai/blog/2023/09/inbox-insights-september-6-2023-when-chatgpt-goes-sideways-content-curation-systems/.

Over the past week, we’ve received and witnessed quite a number of questions along the general theme of content curation:

“Does anyone have experience cataloging content or a tool they’ve used to automate content cataloging?”

“Does anyone here have a recommendation for a good tool to curate and summarize content?”

“We spend A LOT of time trying to figure out the right HOT topics and what we should be pushing out in reference to our services.”

The reality is that while there are a lot of tools on the market that purportedly can fulfill these exact tasks, they’re often burdened with a lot of extras we don’t need, missing some custom functionality we do need, and cost more than we’re willing to pay. So what’s an enterprising startup to do?

We built our own.

Why would we do such a thing? Was there such a gap in the market that it was necessary? The idea actually started when Katie and I worked together at our previous company, and that company was notorious for not wanting to spend a dime more than absolutely necessary. Off-the-shelf solutions did exist, but they were prohibitively expensive, so we engineered a clunky workaround that served our needs at the time.

When we started Trust Insights, we had the time to rebuild the entire idea from scratch. One of the challenges of a resource-constrained environment is that you accumulate technical debt rapidly – as time goes on and you don’t have the time to fix things, patches and quick fixes accumulate like chewing gum under a bus stop bench. After a certain point, the system becomes a labyrinth of quick fixes that make it near impossible to maintain.

So when we re-engineered the entire thing from the ground up, how did we architect it? Fundamentally, when you build a content curation system, you’re essentially building a search engine for private use. That means you need a system that does three things: crawl, index, and rank, or, more generically, ingest, process, and output.
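To make the crawl, index, rank idea concrete, here is a minimal sketch in Python. It is not our production code; the function names (`ingest`, `process`, `output`) and the word-count scoring are assumptions chosen purely for illustration, and a real system would fetch content from the web rather than take it as a list.

```python
from collections import Counter, defaultdict

def ingest(raw_items):
    """Ingest (crawl) stage: turn raw (title, text) pairs into records."""
    return [
        {"id": i, "title": title.strip(), "text": text.strip()}
        for i, (title, text) in enumerate(raw_items)
    ]

def process(records):
    """Process (index) stage: build an inverted index, word -> {record id: count}."""
    index = defaultdict(Counter)
    for rec in records:
        for word in (rec["title"] + " " + rec["text"]).lower().split():
            # Strip surrounding punctuation so "analytics." matches "analytics".
            index[word.strip(".,!?;:\"'()")][rec["id"]] += 1
    return index

def output(records, index, query, limit=5):
    """Output (rank) stage: order records by how often the query words appear."""
    scores = Counter()
    for word in query.lower().split():
        for rec_id, count in index.get(word, {}).items():
            scores[rec_id] += count
    by_id = {rec["id"]: rec for rec in records}
    return [by_id[rec_id]["title"] for rec_id, _ in scores.most_common(limit)]
```

Each stage only depends on the data handed to it by the previous one, so any of the three can be swapped out without touching the others.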

One of the mistakes I made early on in the old version that I rectified in the new version was trying to do everything in one place. A system that is highly modular is easier to maintain (with documentation) than a system that is a massive monolith, in the same way that your car engine is a collection of many different pieces and not just one magical contraption.

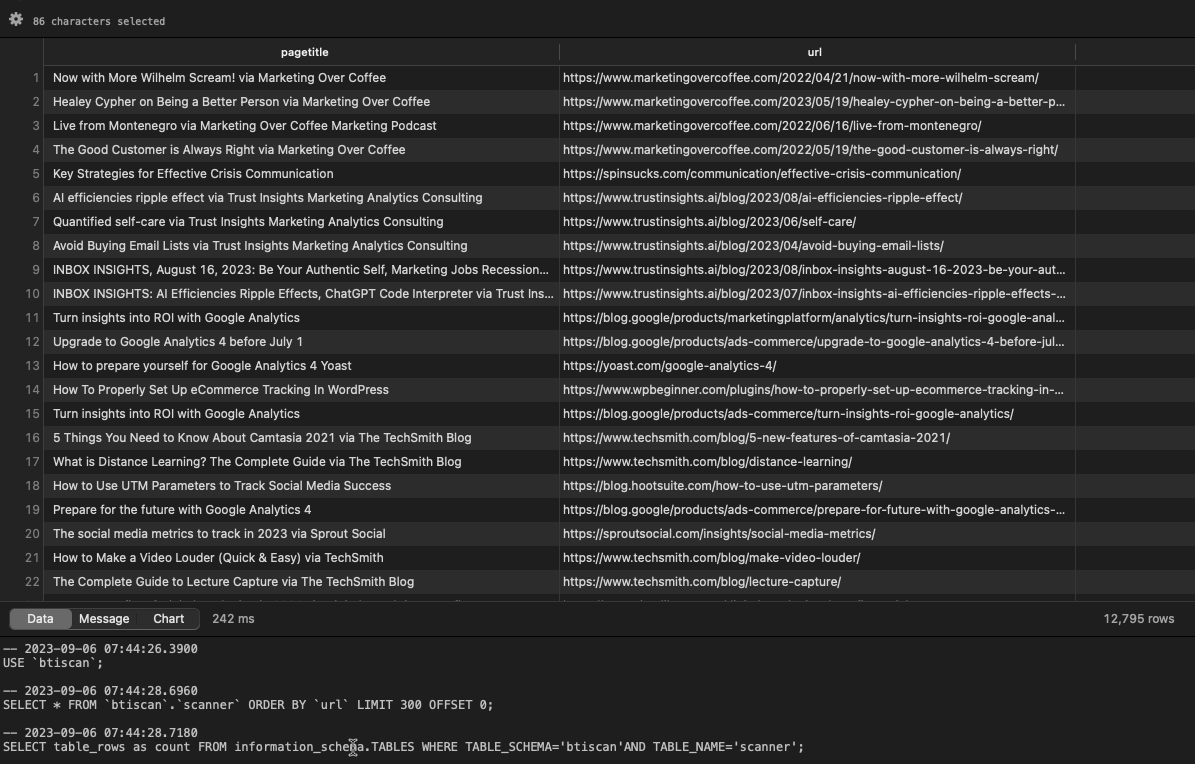

So what does a system like this look like? There’s an ingestion mechanism; we feed it the RSS feeds of blogs for a given industry as well as industry news sites. When we build these for clients, that’s usually the first question we have – what are the credible, non-competitive news sources? From there, we have a series of processing scripts that crawl the content, tag every article for the keywords and topics we care about, and then store all the information in a MySQL database. Finally, there are output modules that produce formatted text for our newsletters.
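For readers who want to see the shape of such a system, here is a rough sketch of the four pieces described above: read an RSS feed, tag articles by keyword, store them, and produce newsletter-ready text. Treat everything in it as an assumption for illustration: the `TOPICS` map, the table layout, and the function names are hypothetical, and it uses Python's built-in `sqlite3` as a stand-in for MySQL so the example runs without a database server.

```python
import sqlite3
import xml.etree.ElementTree as ET

# Hypothetical topic -> keyword map; yours would reflect the topics you care about.
TOPICS = {
    "analytics": ["analytics", "measurement"],
    "ai": ["chatgpt", "language model"],
}

def parse_rss(xml_text):
    """Ingest: pull title, link, and description out of each RSS 2.0 <item>."""
    root = ET.fromstring(xml_text)
    return [
        {
            "title": item.findtext("title", default=""),
            "link": item.findtext("link", default=""),
            "body": item.findtext("description", default=""),
        }
        for item in root.iter("item")
    ]

def tag_article(article):
    """Process: return the sorted topics whose keywords appear in the article."""
    text = (article["title"] + " " + article["body"]).lower()
    return sorted(t for t, kws in TOPICS.items() if any(k in text for k in kws))

def store(conn, articles):
    """Store: save each article with its topics (SQLite here, standing in for MySQL)."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS articles "
        "(link TEXT PRIMARY KEY, title TEXT, topics TEXT)"
    )
    for a in articles:
        conn.execute(
            "INSERT OR REPLACE INTO articles VALUES (?, ?, ?)",
            (a["link"], a["title"], ",".join(tag_article(a))),
        )
    conn.commit()

def newsletter_lines(conn, topic):
    """Output: format the stored articles for one topic as plain-text list items."""
    rows = conn.execute(
        "SELECT title, link FROM articles "
        "WHERE ',' || topics || ',' LIKE ? ORDER BY title",
        (f"%,{topic},%",),
    )
    return [f"- {title}: {link}" for title, link in rows]
```

In use, you would pass the text of a downloaded feed to `parse_rss`, hand the result to `store`, and call `newsletter_lines(conn, "ai")` when it is time to write the newsletter. Keeping each step in its own function mirrors the modular design: the tagging step can later be replaced without rewriting ingestion or output.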

Now, there are definite shortcomings to the system. The biggest shortcoming is that there’s no interface to it. None. The way we access it is through a series of scripts we run, so if you’re not comfortable with a command line, it’s challenging to use. That’s a solvable problem if a customer asks us for it; with powerful tools like ChatGPT’s GPT-4 model that can write entire software platforms, we could easily create an interface. There’s just no need for it right now for our current use cases.

The system as it stands works well today, but we have plans for it. We plan to replace the keyword-based system with a large language model that can infer topics with much more accuracy (at the cost of greater compute power), helping curate ever more relevant content to share. We also plan to integrate our custom-built predictive SEO software into the system, to ensure that we share the topics audiences are searching for at the right time of year. If, for example, people are interested in healthcare analytics during a certain couple of weeks per year, we’d want to make sure we have great content on that topic ready to go, and with predictive analytics plus content curation, that’s achievable.

So what? Why does this matter? Off-the-shelf software packages can do the job, but at the cost of hard dollars plus a lack of customization for your actual use cases. Creating your own software has its own costs – soft dollar costs in maintenance and upkeep – but affords much more flexibility. In the age of generative AI and incredible models like Code Llama and GPT-4, if you have an idea that doesn’t exist yet (or is out of reach financially), it’s worth trying to build your own. At the very least, you’ll have a greater appreciation for the process of software engineering, and at the most, you might just build a new business within your business. So to the folks asking the questions at the beginning of this piece, give this a try. You might surprise yourself!