Introduction

The recurring perception that artificial intelligence (AI) is somehow magical and can create something from nothing leads many projects astray. That’s part of the reason the 2019 Price Waterhouse CEO Survey shows fewer than half of US companies are embarking on strategic AI initiatives: the risk of failure is substantial. In this series, we’re examining the most common ways AI projects fail for companies at the beginning of their AI journey. Be on the lookout for these failures, and ways to remediate or prevent them, in your own AI initiatives.

Part 5: Deployment-Related AI Failures

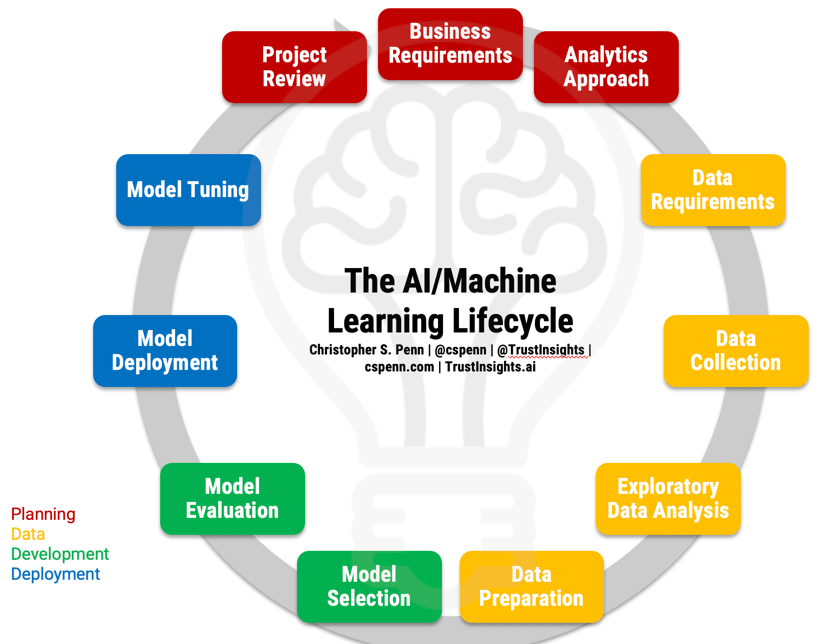

Before we can discuss failures, let’s refresh our memories of what the AI project lifecycle is to understand what should be happening.

Grab the full-page PDF of the lifecycle from our Instant Insight on the topic and follow along.

Now that we’ve identified the major problems in the first four sections, let’s turn our eyes to the major problems we are likely to encounter in the deployment portion of the lifecycle:

- Model Deployment

- Model Tuning

Model Deployment

One of the most important misperceptions to clear up about AI and machine learning is that they’re substantially different, operationally, from any other technology. Machine learning is fundamentally software development; the outcome, a model, is essentially just a piece of software.

The major difference is that instead of humans writing the software, machines write it. We train the machines to write based on the data we give them, but fundamentally, the same rules that apply to human software development also apply to machine software development.

Thus, the same mistakes humans make when releasing software occur in machine learning and AI. Just as humans can write opaque, indecipherable code, so too can machines create models that lack both interpretability and explainability.

Interpretability is our ability as humans to examine a model, step-by-step, and understand how the machine assembled the model. This is vital to our understanding of the model and what decisions the machine is making as it processes data. One of the most important facets of machine learning strategy today is ensuring fairness and reduction of bias in machine learning; a model that lacks interpretability makes bias nearly impossible to detect and correct. Many AI projects lack any kind of interpretability even as software leaders like IBM roll out interpretability software.

Explainability is our ability as humans to explain the results of AI software. Instead of step-by-step decomposition of the model, explainability examines the overall outcomes of the model, how well they align to our requirements and goals, and whether they are compliant with regulatory and legal measures (such as fairness, bias, etc.). When we began the AI/machine learning lifecycle, we specified business requirements, and when we began the data section, we specified data requirements. These requirements also form the basis of a software test plan, a way to test that our models are meeting expectations. With a solid test plan and solid explainability, we can determine if our model has passed or failed.
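
As a rough illustration of how requirements can become a pass/fail test plan, consider the following Python sketch. The requirement names, metric names, and thresholds are hypothetical stand-ins for whatever a real project specified in its business and data requirements.

```python
from dataclasses import dataclass


@dataclass
class Requirement:
    name: str       # plain-language requirement from the planning stage
    metric: str     # name of the measured outcome that tests it
    minimum: float  # lowest acceptable value (hypothetical threshold)


def run_test_plan(requirements, measured):
    """Check each requirement against measured outcomes; missing metrics fail."""
    results = {}
    for req in requirements:
        value = measured.get(req.metric)
        results[req.name] = value is not None and value >= req.minimum
    return results


# Hypothetical test plan and hypothetical measurements from a candidate model.
PLAN = [
    Requirement("Beats the current non-AI process", "accuracy", 0.80),
    Requirement("Similar approval rates across groups", "approval_rate_ratio", 0.80),
]
measured = {"accuracy": 0.86, "approval_rate_ratio": 0.72}

results = run_test_plan(PLAN, measured)
model_passed = all(results.values())

for name, passed in results.items():
    print(f"{'PASS' if passed else 'FAIL'}: {name}")
```

In this made-up example the model is accurate enough but fails the fairness requirement, so it fails the plan overall, even though a look at accuracy alone would have suggested it was ready to deploy.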

One of the challenges in both interpretability and explainability is that, unlike human-written source code which can be examined line-by-line, machine learning models can be extraordinarily challenging to disassemble. However, regulatory requirements are forcing vendors to build in better techniques for interpretability and explainability; now, the burden is on machine learning engineers to leverage those techniques as part of standard development processes.

Thus, the antidote to a lack of interpretability and explainability in our model deployment is to use current techniques for building both into our models from the outset. That means using mature, time-tested, proven model providers such as IBM, Google, Microsoft, and Amazon, and ensuring we properly invest in deployment management systems.

Model Tuning

If deployment in machine learning is the same as deployment/launch of a regular software product, then model tuning is the equivalent of technical support after launch, when a software product doesn’t behave as expected. The key problem that causes most AI projects to fail at this stage in the journey is called model drift.

Model drift is what happens when a model takes in data that causes it to drift outside of acceptable parameters, or deliver increasingly worse results. The best-known example of model drift is Microsoft’s ill-advised Twitter chatbot, Tay, which launched in 2016; within 24 hours, Twitter users had retrained it to be a racist pornbot. Without any guidelines as to what constituted unacceptable performance, the model drifted quickly into outrageous behavior.

More common and less dramatic are the instances when models’ performance simply declines to below what non-AI solutions would provide. This occurs because the model is deployed without proper additional retraining and guidelines as to what should happen over time.

The antidote to model drift is to use machine learning model management software, which requires humans to monitor the models, provide guidelines as to what’s acceptable performance and what isn’t, and make corrections to a model if it’s retraining poorly. An example of this type of software is IBM’s Watson OpenScale, which watches models closely and alerts human administrators when a model’s performance falls below a certain point or a model exhibits signs of bias. When OpenScale detects model drift, it alerts human administrators to make key decisions about whether to retrain the model, change the monitoring parameters, or, in the worst-case scenario (such as Microsoft Tay), shut down the model immediately.
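
The general pattern of monitoring against human-set guidelines can be sketched in a few lines of Python. This is a generic illustration and not how Watson OpenScale works internally; the `DriftMonitor` class, its thresholds, and the status names are hypothetical.

```python
from collections import deque


class DriftMonitor:
    """Track a rolling average of a quality score against human-set limits."""

    def __init__(self, alert_below, shutdown_below, window=3):
        self.alert_below = alert_below        # below this, ask a human to review
        self.shutdown_below = shutdown_below  # below this, take the model offline
        self.scores = deque(maxlen=window)    # keeps only the most recent scores

    def record(self, score):
        """Add a new score and return the status of the rolling average."""
        self.scores.append(score)
        average = sum(self.scores) / len(self.scores)
        if average < self.shutdown_below:
            return "shut_down"
        if average < self.alert_below:
            return "alert_human"
        return "ok"


# Hypothetical thresholds and a hypothetical run of steadily worsening scores.
monitor = DriftMonitor(alert_below=0.75, shutdown_below=0.5, window=2)
statuses = [monitor.record(score) for score in [1.0, 0.75, 0.5, 0.25]]
print(statuses)
```

The thresholds are the important part: they are the guidelines a human has to supply. Without them, a monitor has no way to decide when declining performance has become unacceptable.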

Next: Wrapping Up and Reviewing

In the final post in this series, we’ll revisit the machine learning lifecycle and key lessons to take to your first AI projects. Stay tuned!

