On October 25, 2019, Google changed everything again with its announcement that its deep learning powered natural language processing model, BERT, would now power search queries. With this announcement, machine learning expertise for SEO is no longer optional. Let’s dig into what this all means.

What Is BERT?

BERT is a massive language model that helps machine learning algorithms understand and predict sequences of words, phrases, sentences, and concepts. BERT stands for Bidirectional Encoder Representations from Transformers, open-sourced by Google in 2018. What makes BERT different is that it has a better mathematical representation of context – it understands, for example, that “trucking jobs” and “jobs in trucking” are functionally equal. It also understands how prepositions and other articles of speech modify and signal the searcher’s intent.

If we think back to old school SEO, we would have had a page for each of those terms. That’s no longer necessary or desirable; in fact, the opposite is true now.

Why Does BERT Matter?

BERT matters because it understands the context of a search query at the sentence level, including word order and synonyms. You may have a page about, say, coffee shops in Boston and the word espresso may never appear on the page. If someone asks their Google Assistant, “Hey Google, what’s the nearest espresso to me that’s open right now?”, BERT will understand that a coffee shop may serve espresso and your site may show up in the search results.

BERT also matters a great deal for content quality. By understanding context, sentences, and even entire documents, BERT has the innate ability to determine whether a piece of content makes sense (based on the data it was trained on) and how that content scores against Google’s key E-A-T features: expertise, authority, and trust. Google spends enormous sums of time and money to create labeled training data of what constitutes high expertise, high authority, and high trust content. With BERT’s language model, it can now more easily determine if a piece of content has sentence-level similarity to known good content.

It’s important to clarify that Google’s public announcement has only stated that it’s using BERT to better understand search queries. What we’re inferring is that Google also uses BERT to parse the language of the pages that it maps these search queries to and is continually fine-tuning its model based not only on the search queries but also on the content of the pages it indexes, along with social media data, news, etc.

Why did Google pivot to using BERT? In addition to better general results from search queries, a big reason is that BERT is better suited for handling complex, conversational search queries using voice interfaces. Google is placing its bets on search in many formats.

How Should We Adapt Our SEO Practices?

SEO as we used to do it should be retired. The old way of starting with simple keyword lists and optimizing pages for keywords is effectively dead as of BERT’s launch. Certainly, if that’s all you have, you’ll get some minor benefit (as opposed to publishing a page of complete nonsense), but if any competitor has even the slightest capability in data science and machine learning, they’re going to beat you up and eat your lunch. Here’s the key takeaway:

The keyword is dead. Long live the topic!

So how do we build content for a post-BERT SEO world? In just a bit, we’ll briefly walk through the 10-step process that uses machine learning to optimize for BERT. Before we begin, here are some important prerequisites.

- Stop using content farms/outsourced low-quality volume writers. If you’re one of those companies with an outsourced contractor that cranks out “$5 blog posts”, stop using them. There’s a very good chance they have little to no domain expertise in the topics you care about most, and they’ll generate content that won’t be recognized by BERT-based models as authoritative or expert. Content farms will hurt your SEO more than help now.

- Find your internal subject matter experts. Subject matter experts now matter a TON, because BERT’s ability to identify and look for synonyms is a big deal; your subject matter experts are likely to naturally use such synonyms in the copy anyway, ensuring your content has rich information in it that BERT can match to.

- Write for conversational questions. In your topic, how do people talk naturally? Google has made it clear that conversational search is the default now, from having onboard AI chips in their phones to smart assistants embedded in everything. Almost no one would say, “best coffee shop Boston” to a friend. They would say, “Hey, what’s the best coffee shop in Boston?” Answer questions naturally, the way you would in conversation.

- If you work with an SEO agency, make sure they have both domain expertise and machine learning capabilities. Beware an SEO agency that says they handle every industry, every niche. In the post-BERT world, shallow general knowledge harms instead of helps, and SEO is a quality game before it’s a quantity game. Domain expertise matters! Hire an agency with bench depth in your niche/industry. The best agencies will also be able to implement some or all of the 10-step process below in-house for you, potentially at significant cost savings compared to hiring a data science/machine learning team of your own.

- Choose quality over quantity. If you can, create high-quality content at scale, but if you must make a resourcing choice, choose quality first. Quality of content is paramount now.

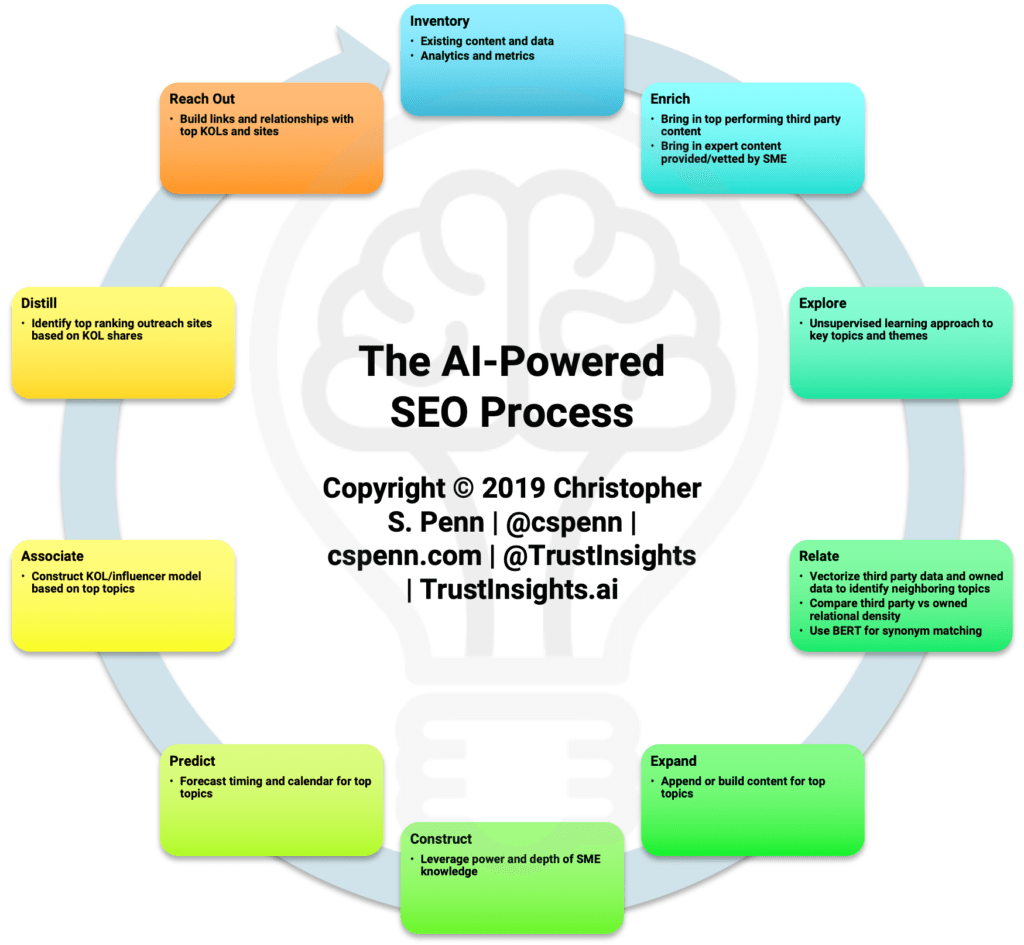

Let’s dig into the meat and potatoes of what post-BERT SEO looks like, a 10-step process:

- Inventory.

- Enrich.

- Explore.

- Relate.

- Expand.

- Construct.

- Predict.

- Associate.

- Distill.

- Reach Out.

We’ll outline each step in two parts: a technical explanation, and a high-level key takeaway.

Step 1: Inventory

Before anything else, you’ll need to have your own data cataloged and inventoried. This is important for understanding what currently earns you search traffic; consider it first-party training data for the exploration of the topic at hand.

Key takeaway: know what you have and how well it’s performed.

Step 2: Enrich

This is the first step in the process that will leverage your subject matter expert (SME). Your SME should do two things: first, provide expert content that reflects what the most authoritative content in your field/topic/niche looks like. Second, you’ll be extracting trusted third party data from SEO/content exploration tools like AHREFS or BuzzSumo; your SME will help vet which content is the most authoritative and credible, and which content is lower in quality.

Key takeaway: build a library of the best stuff in your industry.

Step 3: Explore

Using machine learning, build an unsupervised learning natural language model of your training data, first-party, and the third party. This model should explore your topic and highlight the major themes that occur inside the topic. Again, use your SME to validate your model and make sure that it’s picking up themes that they, as an expert, would expect to see.

Key takeaway: map out your topic/industry to find top themes.

Step 4: Relate

Using machine learning, vectorize your first-party content, and your trusted third party content. Vectorization is the conversion of the text into mathematical representations called embeddings, and these embeddings form the underlying basis of what BERT uses for its language modeling. What BERT (and other state-of-the-art language models) does is look at the statistical relationships between words, phrases, sentences, and themes.

Once you’ve converted your training data into embeddings, compare using a supervised learning method the two sets of embeddings against your known top themes/sentences/phrases identified by your SME. How much and how closely does your content align with the key themes? How much and how closely does the third party content align? That gap is your content expertise gap that you’ll need to fill.

If you have the skills and technology needed to implement BERT, you can also use the model to create and validate synonyms from your training data as well, to potentially discover topics/themes you haven’t explored yet and don’t occur in your training data. This is a fairly advanced step and is optional; BERT’s understanding of context doesn’t mean you must rely on it to understand what’s colocated with your terms, phrases, and topics. Older machine learning tools like FastText will do this capably with much less overhead and competitive outcomes.

Key takeaway: find the gap between your content and the best content out there.

Step 5: Expand

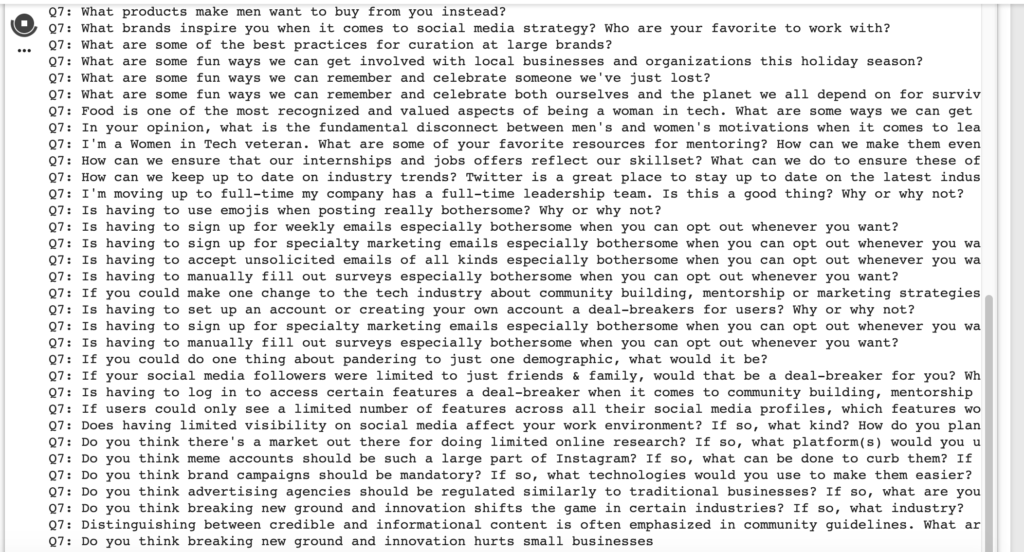

Once you’ve identified your content gap and the major themes, expand the topic into a massive series of related questions that your SME will use to create content going forward. Use your fine-tuned BERT model to ask questions and obtain related questions, or the question generator algorithm of your choice to build a codex of conversational questions. Other options might include GPT-2 and XLNet.

Your SME will also need a checklist of the major themes and topics which are closely related (which you found in the previous step using vectorization).

Key takeaway: build a massive questions list of key topics where you have gaps for your SME to answer.

Step 6: Construct

Your SME will earn their supper here. Using any reasonable means necessary, have your SME answer, in-depth, as many of the detailed questions you generated. Capture their answers and craft them into long-form pieces of content. Ideally, use the transmedia content framework so that you capture the maximum amount of data.

It’s important that you be very careful in editing your SME. Remember that BERT is looking at sentence-level context, so over-editing an SME can remove nuances that the algorithm might find useful for discriminating between expert content and generic, lower value content.

Key takeaway: create content with your SME answering key questions.

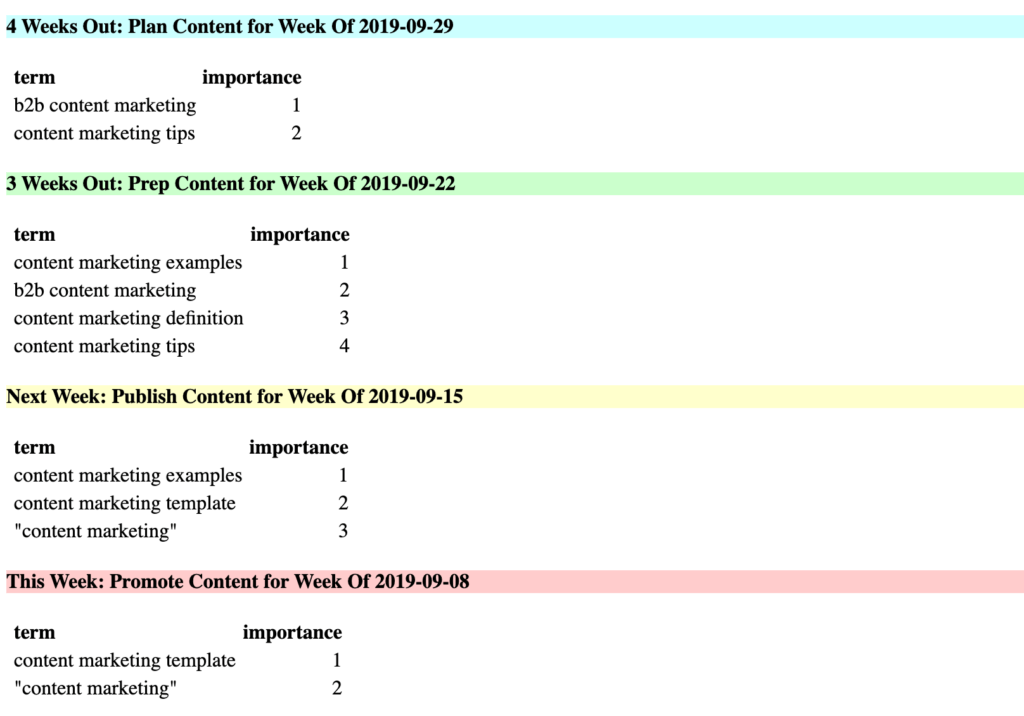

Step 7: Predict

While BERT is the big news of the day, we also know that more than 200 ranking factors go into the overall Google algorithm. We know seasonality plays a role; Google even publishes a tool for checking seasonality, Google Trends. Using time-series forecasting, take your major themes and key questions and forecast them forward over a 4, 13, 26, or 52-week timeframe to identify when search interest for your various questions and topics will peak.

Publish your SME content for each question/topic a couple of weeks in advance of the peak so that it’s available and fresh just as audience interest peaks.

Key takeaway: time your SME content with the market.

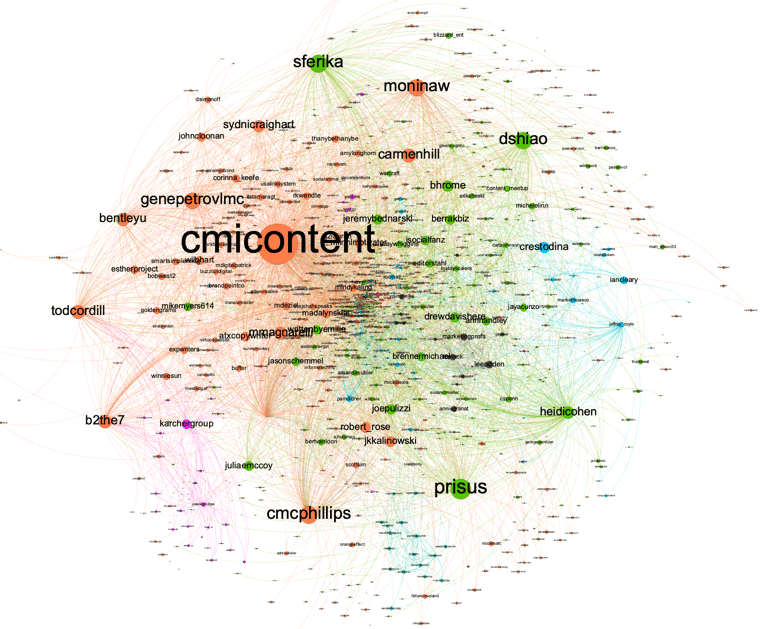

Step 8: Associate

With knowledge of the key questions and topics that comprise your niche, set up media monitoring in a tool like Talkwalker and map out the people most influential on these themes in your space. Use machine learning techniques like network graphing and an appropriate centrality algorithm to find who’s most influential about the topic, and construct a model of who you’ll need to help promote your SME-generated content – and perhaps augment it.

Key takeaway: identify influencers for your SME content to help share.

Step 9: Distill

With your influencers identified, ingest their social content over a long timeframe (up to a year) to identify which sites/publishers they share and engage with the most. What places would your content be naturally suited to guest publish (in part, perhaps not in full), such that an influencer might see it organically and promote it without any additional input?

Use network graphing and the SEO tool of your choice to build a graph of which websites are shared, what links to those sites, and where the key sites link out to.

Key takeaway: where your content could be shared is as important as who shares it. Examine the landscape to identify opportunities.

Step 10: Reach Out

This is where an agency, freelancer, or team will be most helpful. Once you’ve identified the places your content needs to go, reach out to the influencers, the sites, the publishers, etc. and do what you can to get it placed, shared, and linked – as quickly as you can, given the time series forecast you built in step 7. While BERT changes what content we publish, inbound links, social shares, and media coverage are still essential factors in signifying authority and trustworthiness.

Key takeaway: reach out in a timely fashion with influencers and publishers to maximize interaction with your content.

Conclusion

BERT changes everything, and yet in some ways, BERT changes nothing if your content has always been robust, expert-driven, well-distributed, and trustworthy. The technical process outlined above will help you maximize the power of your content and unlock new content opportunities, but focusing on the way people talk about your theme and topics conversationally will take you further than focusing narrowly on stilted, awkward words and phrases of the past.

I hope you found this helpful and useful for planning how you’ll change your SEO strategy now that BERT is live. The window of opportunity to take advantage of the new algorithm and rank well is open now – make good use of it! We use this process for ourselves, and in just a year’s time, we’ve increased our organic search traffic 479.3% year over year.

Shameless plug: if you, your SEO team, or your SEO agency would like help with this process, please feel free to contact us. We’re not an SEO agency, but we do provide analytics, data science, and machine learning support for SEO to a number of clients.

|

Need help with your marketing AI and analytics? |

You might also enjoy: |

|

Get unique data, analysis, and perspectives on analytics, insights, machine learning, marketing, and AI in the weekly Trust Insights newsletter, INBOX INSIGHTS. Subscribe now for free; new issues every Wednesday! |

Want to learn more about data, analytics, and insights? Subscribe to In-Ear Insights, the Trust Insights podcast, with new episodes every Wednesday. |

Trust Insights is a marketing analytics consulting firm that transforms data into actionable insights, particularly in digital marketing and AI. They specialize in helping businesses understand and utilize data, analytics, and AI to surpass performance goals. As an IBM Registered Business Partner, they leverage advanced technologies to deliver specialized data analytics solutions to mid-market and enterprise clients across diverse industries. Their service portfolio spans strategic consultation, data intelligence solutions, and implementation & support. Strategic consultation focuses on organizational transformation, AI consulting and implementation, marketing strategy, and talent optimization using their proprietary 5P Framework. Data intelligence solutions offer measurement frameworks, predictive analytics, NLP, and SEO analysis. Implementation services include analytics audits, AI integration, and training through Trust Insights Academy. Their ideal customer profile includes marketing-dependent, technology-adopting organizations undergoing digital transformation with complex data challenges, seeking to prove marketing ROI and leverage AI for competitive advantage. Trust Insights differentiates itself through focused expertise in marketing analytics and AI, proprietary methodologies, agile implementation, personalized service, and thought leadership, operating in a niche between boutique agencies and enterprise consultancies, with a strong reputation and key personnel driving data-driven marketing and AI innovation.

Great information. Lucky me I came across your blog by chance (stumbleupon).

I have saved as a favorite for later!

Hi Chris

So I mentioned this to Gini today. Google Search I have noticed the results have gotten poorer the last 6 months and has not improved as of today. I also feel their AI is not machine learning because it would know via Android when I type Gini..and it tries to make me use Geno…..it doesn’t then get ok with Gini automatically vs me having to manually add the word to my dictionary.

Another big change happening and no idea if Google is going to try to go the Amazon way for Assistants, but Amazon is a closed search garden. Unless you pay to be listed, Alexa ignores your existence.

Curious how this pans out but I am close to ditching Android over the degradation of the Auto-correct module.