What if LinkedIn’s AI treats your profile differently – not based on your qualifications, your content, or your professional experience – but based on your first name alone?

We ran an experiment to find out. The results concern us, and they should concern you too.

Why We Ran This Experiment

Many professionals have asked whether LinkedIn’s algorithms treat people fairly. Until now, we lacked concrete, reproducible evidence to answer that question. We had anecdotes. We had frustrations. We had suspicions. But we did not have data.

LinkedIn has historically maintained opacity about how their systems work. However, the company has also demonstrated that they respond when presented with evidence-backed concerns. Sakshi Jain, LinkedIn’s engineering director, published a detailed blog post explaining how LinkedIn tests and measures content appearance in the feed – a response to user concerns about algorithmic fairness.

This gives us hope. LinkedIn listens when the evidence is clear and reproducible.

Now, for the first time, we have that reproducible evidence.

What LinkedIn Revealed About Their AI System

In October 2025, LinkedIn published a research paper titled “Large Scale Retrieval for the LinkedIn Feed using Causal Language Models.” This paper fundamentally changed what we know about how LinkedIn decides what content you see.

Here is what LinkedIn’s engineering team revealed:

LinkedIn replaced their old first-pass ranking system with a new system powered by Meta’s LLaMA 3 language model. This new system takes several inputs about you and your content, processes them through their fine-tuned version of LLaMA 3, and generates a rapid decision about whether your content should advance to LinkedIn’s larger recommendation systems.

The critical detail: Your name is an explicit input to this system.

When LinkedIn’s AI evaluates your post, it does not evaluate the content in isolation. The system receives your name, your professional headline, and your post content as a combined package. The AI then creates a mathematical representation – called an “embedding” – of this entire package.

This embedding determines how the system categorizes your content, how it matches your content to potential viewers, and ultimately, whether your content reaches the people you want to reach.

LinkedIn’s paper includes real-world performance statistics showing that this system increased revenue by up to 3.29%. This confirms that the system runs in production – this is not a theoretical research project. This system actively shapes what over one billion LinkedIn users see every day.

Our Hypothesis: Name-Based Bias

Language models learn from human-generated text. Humans, throughout history, have associated certain names with certain genders, ethnicities, religions, and socioeconomic backgrounds. These associations exist in the text that trained these models.

Our hypothesis: If LLaMA 3 encodes these name-based associations, then LinkedIn’s retrieval system might treat identical professional content differently based solely on the perceived characteristics of the author’s name.

If true, this would mean that two professionals with identical qualifications, identical headlines, and identical post content could receive different algorithmic treatment – not because of what they wrote, but because of what their name suggests about who they are.

How We Tested This

We designed a rigorous experiment to isolate the effect of name on algorithmic treatment.

Step 1: Collecting Real LinkedIn Posts

We collected 406 real LinkedIn posts from the public feed. We gathered posts from diverse professional domains:

- Healthcare professionals (#healthcare, #nursing)

- Social issues advocates (#black, #poverty)

- Creative professionals (#music)

- Educators (#teachers)

- Business leaders (#leadership)

This diversity ensures our findings apply broadly across LinkedIn’s professional ecosystem, not just to one niche.

Step 2: Creating Matched Pairs

For each of the 406 posts, we created two versions:

Version A (Male-Coded Names): We transformed any ambiguous or female-coded author name into a male-coded equivalent. Christina became Christian. Roberta became Robert.

Version B (Female-Coded Names): We transformed any ambiguous or male-coded author name into a female-coded equivalent. Robert became Roberta. Christian became Christina.

Critically, we preserved ethnic and cultural consistency in these transformations. Dharmesh became Dharmita. Maria became Mario. The transformations respected the cultural origin of each name.

Everything else remained identical. Same professional headline. Same post content. Same punctuation. Same everything – except the first name.
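To make the pairing concrete, here is a minimal sketch of how a matched pair could be built. The `Post` class, the `make_pair` helper, and the tiny lookup table are hypothetical names for illustration; only the example name swaps come from this post, and a real run would need a much larger, curated mapping.

```python
from dataclasses import dataclass, replace

# Hypothetical lookup table; the name swaps are the examples given in the post.
TO_MALE = {
    "Christina": "Christian",
    "Roberta": "Robert",
    "Maria": "Mario",
    "Dharmita": "Dharmesh",
}
# The female-coded direction is simply the inverse mapping.
TO_FEMALE = {male: female for female, male in TO_MALE.items()}


@dataclass(frozen=True)
class Post:
    first_name: str
    headline: str
    content: str


def make_pair(post: Post) -> tuple[Post, Post]:
    """Return (male-coded, female-coded) versions that differ only in first name."""
    male = replace(post, first_name=TO_MALE.get(post.first_name, post.first_name))
    female = replace(post, first_name=TO_FEMALE.get(post.first_name, post.first_name))
    return male, female


original = Post("Christina", "Marketing Director", "Three lessons from our last campaign...")
male, female = make_pair(original)
```

Because `replace` copies every other field untouched, the headline and content are guaranteed to match within a pair.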

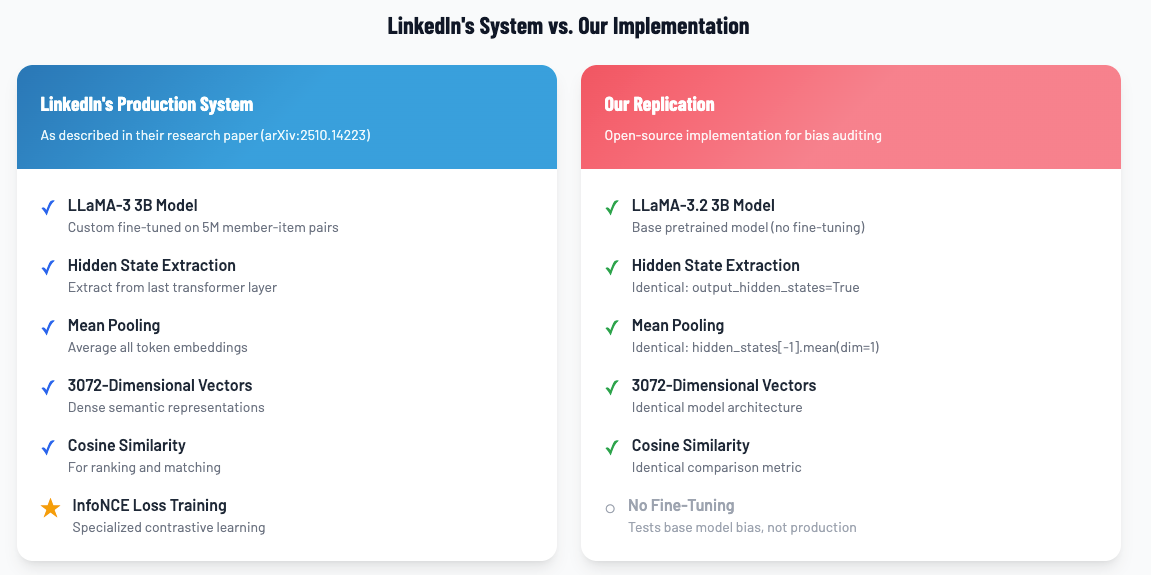

Step 3: Replicating LinkedIn’s Methodology

We did not invent our own measurement approach. We replicated the exact methodology LinkedIn describes in their published paper:

- We used LLaMA 3 (the same base model LinkedIn uses)

- We extracted embeddings from the model’s final layer

- We applied mean pooling across all tokens

- We formatted the prompts exactly as LinkedIn describes

This replication matters. We tested the system the way LinkedIn says it works.
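For readers who want to see what "mean pooling" does, here is a small sketch using toy numbers. In a real run the per-token vectors would come from the final layer of LLaMA 3; the hand-written values and the `mean_pool` function name below are assumptions for illustration only.

```python
def mean_pool(token_vectors: list[list[float]]) -> list[float]:
    """Average per-token vectors into a single embedding (mean pooling)."""
    if not token_vectors:
        raise ValueError("need at least one token vector")
    n_tokens = len(token_vectors)
    # zip(*rows) walks the matrix one dimension (column) at a time.
    return [sum(dim_values) / n_tokens for dim_values in zip(*token_vectors)]


# Toy final-layer output: 3 tokens with 4 dimensions each.
# Real models produce thousands of dimensions per token.
final_layer = [
    [1.0, 0.0, 2.0, 4.0],
    [3.0, 0.0, 2.0, 0.0],
    [2.0, 3.0, 2.0, 2.0],
]
embedding = mean_pool(final_layer)  # one vector with 4 dimensions
```

Every token contributes equally to the result, so the tokens that spell the author's name are averaged in alongside the headline and post content.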

Think of LinkedIn’s process like making a pizza. A pizzaiolo making a pizza starts with several ingredients – dough, sauce, cheese, toppings – combines them in a specific way, and bakes them in the oven. What comes out is a finished pizza where all the ingredients have melded together. That pizza has a distinct character: a flavor profile, a texture, an overall identity that emerges from how all those ingredients combined during baking. If you handed that pizza to a food critic, they could evaluate it, compare it to other pizzas, and decide how to categorize it.

LinkedIn’s embedding process works the same way. The “ingredients” are your name, your professional headline, and your post content. The AI “bakes” these ingredients together by processing them through the LLaMA 3 model. The “finished pizza” emerges when the system extracts the mathematical values from the model’s final processing layer and combines them together (what engineers call “mean pooling”). What comes out is a mathematical representation – the embedding – that captures the combined “flavor” of your entire post.

This embedding becomes the pizza that LinkedIn’s other systems evaluate. Search matching, content recommendations, “People You May Know” suggestions – all of these systems examine your pizza’s characteristics to decide what to do with your post. They compare your pizza to what they think viewers want to eat.

We followed LinkedIn’s pizza-making recipe exactly. Same dough. Same sauce. Same oven temperature. Same baking time. The only ingredient we changed was one topping: the name.

If the name has no effect on the final pizza, then the pizza made from “Christina’s marketing post” should have the same flavor profile as the pizza made from “Christian’s marketing post” – because every other ingredient is exactly the same. Our experiment measured whether LinkedIn’s AI produces pizzas with identical characteristics, or whether changing just the name ingredient changes how the pizza turns out.

Step 4: Measuring the Difference

For each of our 406 post pairs, we measured the “cosine similarity” between the male-coded version and the female-coded version.

What is cosine similarity? Think of it as a measure of how “alike” two things appear to an AI system. A score of 1.0 means the two embeddings point in exactly the same direction, so the AI sees the two items as identical. Any score below 1.0 means the AI sees some difference between them.

If the author’s name had no effect on how the AI processes content, every pair would score exactly 1.0. The AI would see “Christina’s post about marketing” and “Christian’s post about marketing” as mathematically identical, because the content is identical.
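Cosine similarity itself is a short calculation. The sketch below uses made-up three-dimensional vectors rather than real embeddings, and the function name is our own.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors of equal length."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


# Same direction (one vector is a scaled copy of the other): score of 1.0.
same = cosine_similarity([1.0, 2.0, 3.0], [2.0, 4.0, 6.0])
# One coordinate nudged: the score drops slightly below 1.0.
shifted = cosine_similarity([1.0, 2.0, 3.0], [1.0, 2.0, 3.5])
```

Note that the score can never exceed 1.0, so any measured difference between two embeddings shows up as a value below 1.0.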

What We Found

The AI did not treat identical content identically.

The Core Finding

Mean cosine similarity: 0.994

This number should be 1.0. It is not.

Across all 406 pairs, the AI consistently produced different mathematical representations for identical content when the only difference was the author’s name.

What Does 0.994 Mean?

A 0.6% deviation might sound small. It is not small, for three reasons:

First, this deviation is systematic, not random.

If the differences were random noise, we would expect them to cancel out across 406 pairs. Instead, the deviation persisted consistently in the same direction across every pair we tested. The AI systematically encodes name-based information into its representation of professional content.

Imagine you order pizza with a friend. One night, your slice is shaped slightly differently than your friend’s slice – maybe yours is a bit narrower at the tip, theirs a bit wider. No big deal. You would not even notice, and you both still get pizza.

But now imagine this happens every time you order pizza together. Every pizza. Every slice. Your slices are consistently shaped differently. The difference is small on any given night, but the pattern is unmistakable: the pizza is being cut differently depending on who receives which slice.

Now imagine this is not just you – it is everyone with your first name. Everyone named “Christina” consistently gets one shape of slice. Everyone named “Christian” consistently gets a different shape. The pizza shop is not intentionally discriminating; maybe their slicing guide has a subtle variation in it. But the effect is the same: one group systematically receives different slices than another, pizza after pizza, day after day.

That is what we found in LinkedIn’s AI. The individual difference per post is small. But it happens consistently, in the same direction, for every post we tested. Over the billions of posts LinkedIn processes, these small consistent differences aggregate into substantial differential treatment.

Second, statisticians have rigorous ways to measure whether an effect is meaningful.

We applied multiple statistical tests:

| Test | Result | What It Means |
| --- | --- | --- |
| One-sample t-test | t = -18.9, p < 0.0001 | The deviation from 1.0 is real, not chance |
| Wilcoxon signed-rank test | W = 0, p < 0.0001 | Non-parametric analysis confirms this finding |
| Permutation test (10,000 samples) | p < 0.0001 | Randomization testing confirms this finding |

If names truly had no effect, the probability of seeing a deviation this large by random chance would be less than 1 in 10,000.
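As a rough illustration of two of these tests, the sketch below computes a one-sample t statistic and a sign-flip permutation p-value against a reference of 1.0. The similarity scores are randomly generated stand-ins, not our data, and the sign-flip scheme is one common way to run a one-sample permutation test; we are not claiming it matches the exact procedure in the paper.

```python
import math
import random
import statistics


def one_sample_t(values: list[float], expected: float) -> float:
    """t statistic for the null hypothesis that the mean equals `expected`."""
    n = len(values)
    standard_error = statistics.stdev(values) / math.sqrt(n)
    return (statistics.mean(values) - expected) / standard_error


def sign_flip_permutation_p(values, expected, n_resamples=10_000, seed=0):
    """Two-sided p-value from randomly flipping the sign of each deviation."""
    rng = random.Random(seed)
    deviations = [v - expected for v in values]
    n = len(deviations)
    observed = abs(sum(deviations) / n)
    hits = 0
    for _ in range(n_resamples):
        flipped = [d if rng.random() < 0.5 else -d for d in deviations]
        if abs(sum(flipped) / n) >= observed:
            hits += 1
    # Add-one correction so the estimate is never exactly zero.
    return (hits + 1) / (n_resamples + 1)


# Made-up similarity scores for illustration only; NOT the study's data.
rng = random.Random(42)
similarities = [1.0 - abs(rng.gauss(0.006, 0.003)) for _ in range(100)]

t_stat = one_sample_t(similarities, expected=1.0)
p_value = sign_flip_permutation_p(similarities, expected=1.0)
```

With 10,000 resamples, the smallest p-value this estimator can report is about 1 in 10,000, which is why results of this kind are written as "p < 0.0001".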

Third, we measured the “effect size” using Cohen’s d.

Cohen’s d is a standard statistical measure of how large an effect is, regardless of sample size. Here is how statisticians interpret Cohen’s d:

- Less than 0.2: Negligible effect

- 0.2 to 0.5: Small effect

- 0.5 to 0.8: Medium effect

- 0.8 or greater: Large effect

Our Cohen’s d: -0.93

This is a large effect. The AI does not treat male-coded names and female-coded names the same way, and the difference is substantial and consistent.
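Here is a minimal sketch of a one-sample Cohen's d measured against a reference value of 1.0, together with the interpretation bands listed above. We assume the one-sample form (mean deviation divided by the sample standard deviation); the scores are invented for the example.

```python
import statistics


def cohens_d_one_sample(values: list[float], reference: float) -> float:
    """Mean deviation from `reference`, in units of the sample standard deviation."""
    return (statistics.mean(values) - reference) / statistics.stdev(values)


def interpret(d: float) -> str:
    """Map the size of d onto the conventional bands listed above."""
    size = abs(d)
    if size < 0.2:
        return "negligible"
    if size < 0.5:
        return "small"
    if size < 0.8:
        return "medium"
    return "large"


# Made-up similarity scores for illustration only; NOT the study's data.
scores = [0.99, 0.995, 1.0, 0.985, 0.99]
d = cohens_d_one_sample(scores, reference=1.0)
label = interpret(d)
```

The sign of d only records that the scores fall below the reference; the interpretation bands apply to its absolute value.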

Returning to the pizza analogy: Cohen’s d tells us not just that your slices are shaped differently, but how reliably and substantially different they are compared to the natural variation in how slices get cut.

A negligible Cohen’s d (below 0.2) would mean slice shapes vary randomly – sometimes yours looks a bit different, sometimes your friend’s does, with no real pattern. You could not predict whose slice would look different on any given night.

A large Cohen’s d (above 0.8) means the pattern is unmistakable. If you lined up a hundred pizzas and examined every slice, you would see a clear, consistent difference between the slices “Christina” receives and the slices “Christian” receives. The difference is not buried in noise – it stands out distinctly from the normal variation in how pizzas get cut.

Our Cohen’s d of -0.93 means the slicing is not subtly inconsistent. It is reliably, consistently, and substantially giving one group differently shaped slices than the other. Every time. Across every pizza we measured.

The Effect Held Across Our Entire Sample

We tracked how our findings evolved as we collected more data:

| Sample Size | Mean Similarity | Cohen’s d |
| --- | --- | --- |
| 76 pairs | 0.9933 | -0.808 (Large) |
| 158 pairs | 0.9934 | -0.894 (Large) |
| 210 pairs | 0.9939 | -0.905 (Large) |
| 285 pairs | 0.9937 | -0.960 (Large) |
| 334 pairs | 0.9939 | -0.902 (Large) |
| 406 pairs | 0.9940 | -0.927 (Large) |

The effect did not diminish as we added more data. It stabilized in the “large effect” range. This is not a statistical fluke that disappears with larger samples – it is a robust, replicable finding.
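A checkpoint table like this one can be produced by recomputing the summary on growing prefixes of the data. The sketch below shows the idea with randomly generated stand-in scores; only the checkpoint sizes come from the table, and the function name is our own.

```python
import random
import statistics

CHECKPOINTS = [76, 158, 210, 285, 334, 406]  # sample sizes from the table


def running_summary(similarities, checkpoints, reference=1.0):
    """Return (n, mean similarity, one-sample Cohen's d) for the first n pairs."""
    rows = []
    for n in checkpoints:
        subset = similarities[:n]
        mean = statistics.mean(subset)
        d = (mean - reference) / statistics.stdev(subset)
        rows.append((n, mean, d))
    return rows


# Made-up similarity scores for illustration only; NOT the study's data.
rng = random.Random(7)
fake_scores = [1.0 - abs(rng.gauss(0.006, 0.0065)) for _ in range(406)]

summary = running_summary(fake_scores, CHECKPOINTS)
for n, mean, d in summary:
    print(f"{n:>4} pairs  mean={mean:.4f}  d={d:.3f}")
```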

An Important Clarification: Different Does Not Mean “Better” or “Worse”

We want to be precise about what our findings do and do not show.

We are not claiming that men are treated better than women, or that women are treated better than men. Our data does not support either conclusion, because we cannot see what LinkedIn’s downstream systems do with these embeddings.

What we measured is cosine similarity – a measure of how alike two mathematical representations are. A score of 0.994 instead of 1.0 tells us the AI creates different embeddings for male-coded names versus female-coded names. The negative Cohen’s d tells us this difference is consistent and substantial.

But “different” does not tell us “better” or “worse.”

These embeddings exist in a mathematical space with thousands of dimensions. We do not know which direction in that space leads to more visibility, better job matches, or favorable treatment by downstream systems. The male-coded embedding points in one direction; the female-coded embedding points in a slightly different direction. Whether either direction confers an advantage – and to whom – depends entirely on how LinkedIn’s downstream systems interpret and use these embeddings.
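One way to picture "a slightly different direction" is to convert a cosine similarity into the angle between the two vectors. The snippet below does this for the mean score of 0.994. Treat it as an illustration only: the angle of an average score is not the same as the average angle across all pairs.

```python
import math

mean_similarity = 0.994  # mean cosine similarity reported in this post

# Cosine similarity is the cosine of the angle between two vectors,
# so the inverse cosine recovers that angle.
angle_radians = math.acos(mean_similarity)
angle_degrees = math.degrees(angle_radians)

print(f"{angle_degrees:.2f} degrees")
```

This works out to a little over 6 degrees of separation between the two embeddings.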

What we can say: The AI treats identical content differently based on the perceived gender of the author’s name. This differential treatment is statistically detectable, large in effect size, and consistent across our entire sample. Critically, the AI should NOT treat identical content differently based on this characteristic.

What we cannot say: Which group, if either, benefits from this differential treatment.

To determine whether one group receives favorable or unfavorable treatment, we would need access to LinkedIn’s production systems and the ability to measure actual outcomes – visibility, engagement, job matches, recommendations. We do not have that access.

This distinction matters. We are identifying a measurable phenomenon that could impact downstream systems. We are not making claims about who wins or loses from that phenomenon. The honest answer is: we do not know, and our data cannot tell us.

But the difference should not be there.

So What? Why This Matters to You

The Scale Problem

LinkedIn serves over one billion professionals worldwide. At this scale, small systematic biases aggregate into massive effects.

Consider: If the algorithm treats content differently based on name, and this different treatment affects even 1% of members, that is 10 million professionals potentially affected. Our measured effect is consistent across 100% of our test pairs.

The Invisible Filter Problem

This bias operates at what engineers call the “first filter” stage. Before any human sees your post – before your network engages with it, before recruiters discover it, before it has any chance to prove its value – LinkedIn’s AI has already created a mathematical representation of it.

That mathematical representation includes information about your name.

If the AI encodes your name differently than it encodes someone else’s name, the downstream systems inherit that difference. Search results. Job recommendations. “People You May Know” suggestions. Connection recommendations. All of these systems build on top of these foundational embeddings.

You cannot overcome a bias that operates before anyone sees your content. You cannot “work harder” or “write better” to compensate for differential treatment at the embedding layer.

The Cold-Start Problem

LinkedIn’s own research acknowledges that new users – professionals without established engagement histories – face significant performance gaps in their recommendation systems.

Why does this matter here? Established users have engagement signals that can partially counteract embedding-level biases. If your posts consistently get engagement, the algorithm learns that your content performs well regardless of what the initial embedding suggested.

New users have no such protective history. They experience the full force of whatever biases exist in the embedding layer. A new professional named “Christina” may face systematically different algorithmic treatment than an equally qualified new professional named “Christian” – and neither has the engagement history to counteract it.

What We Cannot Prove (And Why This Still Matters)

What We Demonstrated

- Differential treatment exists. The AI produces different embeddings for identical content when only the author’s name differs.

- The effect is large and consistent. This is not noise. Cohen’s d of -0.93 indicates a substantial, systematic difference.

- The effect is statistically robust. Multiple tests confirm the finding with p < 0.0001.

- We replicated LinkedIn’s methodology. We did not invent a test that might not apply to their system. We followed their published approach.

What We Did Not Demonstrate

- We tested the base LLaMA 3 model, not LinkedIn’s fine-tuned version. LinkedIn fine-tunes the base model on their own data. This fine-tuning could reduce the bias we found. It could also amplify it. We do not know which, because LinkedIn has not disclosed this information.

- We measured embeddings, not outcomes. We proved that the AI treats names differently. We did not prove that this different treatment causes specific harms like fewer job offers or reduced visibility. That would require access to LinkedIn’s production systems, which we do not have.

- We cannot prove intent. We demonstrate that bias exists. We do not claim LinkedIn intended this bias or even knows about it.

- We tested gender-coded names specifically. The same methodology could test for ethnic, religious, or other name-based biases. We focused on gender as a starting point.

Why This Still Matters Despite These Limitations

Differential treatment at the embedding layer is foundational. Every downstream system that uses these embeddings inherits whatever biases they contain.

Even if we cannot prove specific harms, the existence of systematic differential treatment in a system that affects over one billion professionals warrants serious attention.

What We Ask of LinkedIn

LinkedIn has demonstrated that they take algorithmic fairness seriously. Sakshi Jain’s blog post shows the company invests in testing and measuring their systems.

We ask LinkedIn to extend that commitment to this specific concern:

Disclose Your Bias Testing Methodology

Did LinkedIn test LLaMA 3 for name-based bias before deploying it in production? If so, what methodology did they use? What did they find? How did they address it?

If LinkedIn did not test for this specific bias, we encourage them to do so now. Our methodology is fully documented. Our code is open source. LinkedIn can replicate our tests on their fine-tuned model and publish the results.

Publish the Results

Transparency builds trust. If LinkedIn’s fine-tuning eliminated or reduced the bias we found in the base model, publishing that finding would reassure over one billion users. If the bias persists or is amplified, publishing that finding would demonstrate LinkedIn’s commitment to honest self-assessment.

What You Can Do

Understand How Algorithms Affect Your Career

Your professional visibility on LinkedIn depends partly on algorithmic decisions you cannot see. Understanding that these systems have biases – and that these biases operate before your content ever reaches a human audience – helps you contextualize your experience on the platform.

If you have ever felt that LinkedIn’s algorithm does not treat you fairly, you may be right. Our research suggests that algorithmic treatment varies based on factors unrelated to your professional value.

Share This Research

Algorithmic accountability requires public awareness. The more professionals understand how these systems work – and how they can fail – the more pressure exists for platforms to address these issues transparently.

Read the Full Research

This blog post summarizes our findings. The full academic paper contains complete methodology details, statistical analyses, and technical specifications.

- Read the full paper: https://doi.org/10.5281/zenodo.17982121

- Explore the code and data: https://github.com/trustinsights/linkedinbias

Both are freely available. We believe that research into algorithmic bias should be open and accessible.

The Bottom Line

We found systematic bias in the AI system that powers LinkedIn’s content retrieval. The AI treats identical professional content differently based on the perceived gender of the author’s name. This effect is large (Cohen’s d = -0.93), consistent across 406 test pairs, and statistically robust (p < 0.0001).

We tested the base model that LinkedIn uses. We cannot prove what happens in LinkedIn’s fine-tuned production system – but we can prove that the foundation LinkedIn builds on contains this bias.

LinkedIn serves over one billion professionals. The stakes are too high for opacity.

We call on LinkedIn to disclose their bias testing methodology, publish their findings, and demonstrate their commitment to treating every professional fairly – regardless of what their name suggests about who they are.

This research was conducted by Christopher S. Penn, Chief Data Scientist, and Katie Robbert, Chief Executive Officer, at Trust Insights. We release this work to advance algorithmic accountability in professional platforms. We declare no competing interests.
